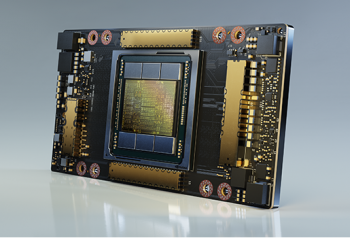

Super Micro Computer, Inc., a global leader in enterprise computing, storage, networking solutions and green computing technology, announced two new systems designed for artificial intelligence (AI) deep learning applications that fully leverage the third-generation NVIDIA HGX™ technology with the new NVIDIA A100™ Tensor Core GPUs. The company also announced full support for the new NVIDIA A100 GPUs across its broad portfolio of 1U, 2U, 4U and 10U GPU servers. NVIDIA A100 is the first elastic, multi-instance GPU that unifies training, inference, HPC, and analytics.

“Expanding upon our industry-leading portfolio of GPU systems and NVIDIA HGX-2 system technology, Supermicro is introducing a new 2U system implementing the new NVIDIA HGX™ A100 4 GPU board (formerly codenamed Redstone) and a new 4U system based on the new NVIDIA HGX A100 8 GPU board (formerly codenamed Delta) delivering 5 PetaFLOPS of AI performance,” said Charles Liang, CEO and president of Supermicro. “As GPU accelerated computing evolves and continues to transform data centers, Supermicro will provide customers the very latest system advancements to help them achieve maximum acceleration at every scale while optimizing GPU utilization. These new systems will significantly boost performance on all accelerated workloads for HPC, data analytics, deep learning training and deep learning inference.”

As a balanced data centre platform for HPC and AI applications, Supermicro’s new 2U system leverages the NVIDIA HGX A100 4 GPU board with four direct-attached NVIDIA A100 Tensor Core GPUs using PCI-E 4.0 for maximum performance and NVIDIA NVLink™ for high-speed GPU-to-GPU interconnects. This advanced GPU system accelerates compute, networking and storage performance with support for one PCI-E 4.0 x8 and up to four PCI-E 4.0 x16 expansion slots for GPUDirect RDMA high-speed network cards and storage such as InfiniBand™ HDR™, which supports up to 200Gb per second bandwidth.

“AI models are exploding in complexity as they take on next-level challenges such as accurate conversational AI, deep recommender systems and personalized medicine,” said Ian Buck, general manager and VP of accelerated computing at NVIDIA. “By implementing the NVIDIA HGX A100 platform into their new servers, Supermicro provides customers the powerful performance and massive scalability that enable researchers to train the most complex AI networks at unprecedented speed.”

Supermicro’s new 4U system supports eight A100 Tensor Core GPUs optimised for AI and machine learning. For customers that want to scale their deployment as their processing requirements expand, the 4U form factor with eight GPUs is ideal. The new 4U system will have one NVIDIA HGX A100 8 GPU board with eight A100 GPUs all-to-all connected with NVIDIA NVSwitch™ for up to 600GB per second GPU-to-GPU bandwidth and eight expansion slots for GPUDirect RDMA high-speed network cards. Ideal for deep learning training, this scale-up platform lets data centres create next-gen AI and maximise data scientists’ productivity with support for ten x16 expansion slots.

Customers can expect a significant performance boost across Supermicro’s extensive portfolio of 1U, 2U, 4U and 10U multi-GPU servers when they are equipped with the new NVIDIA A100 GPUs. Supermicro’s new A+ GPU system supports up to eight full-height double-wide (or single-wide) GPUs via direct-attach PCI-E 4.0 x16 CPU-to-GPU lanes for maximum acceleration, without any PCI-E switch, for the lowest latency and highest bandwidth. The system also supports up to three additional high-performance PCI-E 4.0 expansion slots for a variety of uses, including high-performance networking connectivity up to 100G. An additional AIOM slot supports a Supermicro AIOM card or an OCP 3.0 mezzanine card.

Supermicro, the leader in AI system technology, offers multi-GPU optimised thermal designs that provide the highest performance and reliability for AI, Deep Learning, and HPC applications. With 1U, 2U, 4U, and 10U rackmount GPU systems; Ultra, BigTwin™, and embedded systems supporting GPUs; as well as GPU blade modules for its 8U SuperBlade®, Supermicro offers the industry’s widest and deepest selection of GPU systems to power applications from Edge to Cloud.

Supermicro plans to add the new NVIDIA EGX™ A100 configuration to its edge server portfolio to deliver enhanced security and unprecedented performance at the edge. The EGX A100 converged accelerator combines an NVIDIA Mellanox SmartNIC with GPUs powered by the new NVIDIA Ampere architecture, so enterprises can run AI at the edge more securely.
