Confluent, Inc., the data streaming pioneer, has announced AI (Artificial Intelligence) Model Inference, an upcoming feature on Confluent Cloud for Apache Flink ®, to enable teams to easily incorporate Machine Learning (ML) into data pipelines.

Confluent previously introduced Confluent Platform for Apache Flink ®, a Flink distribution that enables stream processing in on-premises or hybrid environments with support from the company’s Flink experts. It also unveiled Freight clusters, a new cluster type for Confluent Cloud that provides a cost-effective way to handle large-volume use cases that are not time-sensitive, such as logging or telemetry data.

AI Model Inference on Apache Flink Simplifies Building and Launching AI and ML Applications

Generative AI helps organisations innovate faster and deliver more tailored customer experiences. AI workloads need fresh, context-rich data to ensure the underlying models generate accurate output and results for businesses to make informed decisions based on the most current information available. However, developers often have to use several tools and languages to work with AI models and data processing pipelines, leading to complex and fragmented workloads.

This can make it challenging to leverage the most current and relevant data for decision-making, leading to errors or inconsistencies and compromising the accuracy and reliability of AI-driven insights. These issues can cause increased development time and difficulties in maintaining and scaling AI applications.

With AI Model Inference in Confluent Cloud for Apache Flink ®, organisations can use simple SQL statements from within Apache Flink to make calls to AI engines, including OpenAI, AWS SageMaker, GCP Vertex, and Microsoft Azure.

Now, enterprises can orchestrate data cleaning and processing tasks on a single platform.

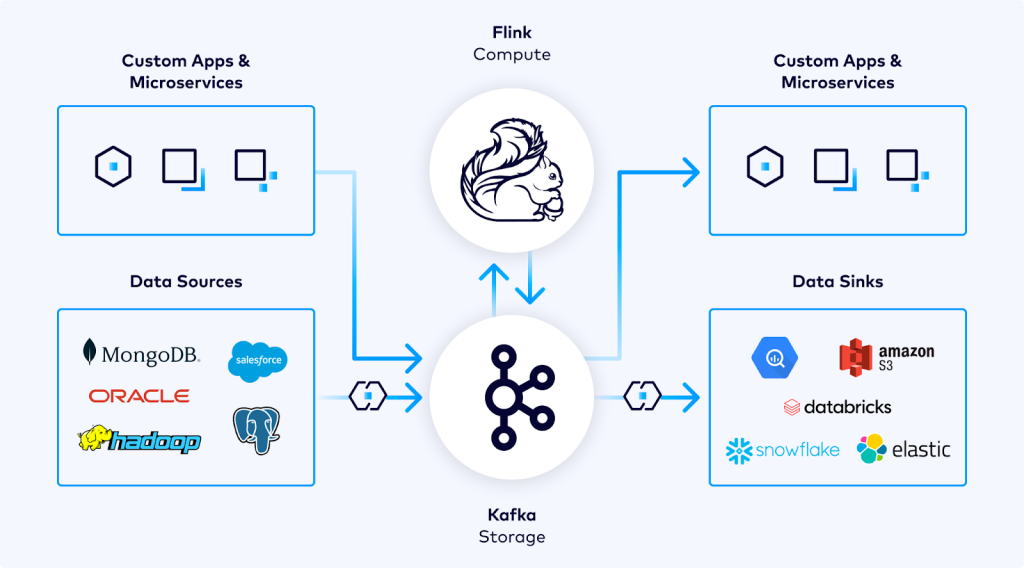

“Apache Kafka and Flink are the critical links to fuel machine learning and artificial intelligence applications with the most timely and accurate data,” said Shaun Clowes, Chief Product Officer at Confluent. “Confluent’s AI Model Inference removes the complexity involved when using streaming data for AI development by enabling organisations to innovate faster and deliver powerful customer experiences.” AI Model Inference enables companies to:

- Simplify AI development by using familiar SQL syntax to work directly with AI/ML models, reducing the need for specialised tools and languages.

- Establish seamless coordination between data processing and AI workflows to improve efficiency and reduce operational complexity.

- Enable accurate, real-time AI-driven decision-making by leveraging fresh, contextual streaming data.

“Leveraging fresh, contextual data is paramount for training and refining AI models, and for use at time of inference to improve the accuracy and relevancy of outcomes, ” said Stewart Bond, Vice President, Data Intelligence and Integration Software, at IDC. “Organisations need to improve efficiencies of AI processing by unifying data integration and processing pipelines with AI models. Flink can now treat foundational models as first-class resources, enabling the unification of real-time data processing with AI tasks to streamline workflows, enhance efficiency, and reduce operational complexity. These capabilities empower organizations to make accurate, real-time AI-driven decisions based on the most current and relevant streaming data while enhancing performance and value.”

Support for AI Model Inference is currently available in early access to select customers. Customers can sign up for early access to learn more about this offering.

Enabling Stream Processing in Private Clouds and On-Prem Environments

Many organisations are looking for hybrid solutions to protect more sensitive workloads. With Confluent Platform for Apache Flink ®, a Flink distribution fully supported by Confluent, customers can easily leverage stream processing for on-prem or private cloud workloads with long-term expert support. Apache Flink can be used in tandem with Confluent Platform with minimal changes to existing Flink jobs and architecture.

Confluent Platform for Apache Flink ® can help organisations:

- Minimise risk with unified Flink and Kafka support and expert guidance from the foremost experts in the data streaming industry.

- Receive timely assistance in troubleshooting and resolving issues, reducing the impact of any operational disruptions to mission-critical applications.

- Ensure that stream processing applications are secure and up to date with off-cycle bug and vulnerability fixes.

With Kafka and Flink available in Confluent’s complete data streaming platform, organisations can ensure better integration and compatibility between technologies, and receive comprehensive support for streaming workloads across all environments.

Unlike open source Apache Flink, which only maintains the two most recent releases, Confluent offers three years of support for every Confluent Platform for Apache Flink ® release from launch, guaranteeing uninterrupted operations and peace of mind. Confluent Platform for Apache Flink ® will be available to Confluent customers later this year.

New Auto-Scaling Freight Clusters Offer More Cost-Efficiency at Scale

Many organisations use Confluent Cloud to process logging and telemetry data. These use cases involve large amounts of business-critical data but are often less latency-sensitive since they typically feed into indexing or batch aggregation engines.

To make these common use cases more cost-efficient for customers, Confluent is introducing Freight clusters—a new serverless cluster type with up to 90% lower cost for high-throughput use cases with relaxed latency requirements.

Powered by Elastic CKUs, Freight clusters seamlessly auto-scale based on demand with no manual sizing or capacity planning required, enabling organisations to minimize operational overhead and optimise costs by paying only for the resources that they use when they need it. Freight clusters are available in early access in select AWS regions.

Customers can sign up for early access to learn more about this offering.

(0)

(0) (0)

(0)Archive

- October 2024(44)

- September 2024(94)

- August 2024(100)

- July 2024(99)

- June 2024(126)

- May 2024(155)

- April 2024(123)

- March 2024(112)

- February 2024(109)

- January 2024(95)

- December 2023(56)

- November 2023(86)

- October 2023(97)

- September 2023(89)

- August 2023(101)

- July 2023(104)

- June 2023(113)

- May 2023(103)

- April 2023(93)

- March 2023(129)

- February 2023(77)

- January 2023(91)

- December 2022(90)

- November 2022(125)

- October 2022(117)

- September 2022(137)

- August 2022(119)

- July 2022(99)

- June 2022(128)

- May 2022(112)

- April 2022(108)

- March 2022(121)

- February 2022(93)

- January 2022(110)

- December 2021(92)

- November 2021(107)

- October 2021(101)

- September 2021(81)

- August 2021(74)

- July 2021(78)

- June 2021(92)

- May 2021(67)

- April 2021(79)

- March 2021(79)

- February 2021(58)

- January 2021(55)

- December 2020(56)

- November 2020(59)

- October 2020(78)

- September 2020(72)

- August 2020(64)

- July 2020(71)

- June 2020(74)

- May 2020(50)

- April 2020(71)

- March 2020(71)

- February 2020(58)

- January 2020(62)

- December 2019(57)

- November 2019(64)

- October 2019(25)

- September 2019(24)

- August 2019(14)

- July 2019(23)

- June 2019(54)

- May 2019(82)

- April 2019(76)

- March 2019(71)

- February 2019(67)

- January 2019(75)

- December 2018(44)

- November 2018(47)

- October 2018(74)

- September 2018(54)

- August 2018(61)

- July 2018(72)

- June 2018(62)

- May 2018(62)

- April 2018(73)

- March 2018(76)

- February 2018(8)

- January 2018(7)

- December 2017(6)

- November 2017(8)

- October 2017(3)

- September 2017(4)

- August 2017(4)

- July 2017(2)

- June 2017(5)

- May 2017(6)

- April 2017(11)

- March 2017(8)

- February 2017(16)

- January 2017(10)

- December 2016(12)

- November 2016(20)

- October 2016(7)

- September 2016(102)

- August 2016(168)

- July 2016(141)

- June 2016(149)

- May 2016(117)

- April 2016(59)

- March 2016(85)

- February 2016(153)

- December 2015(150)