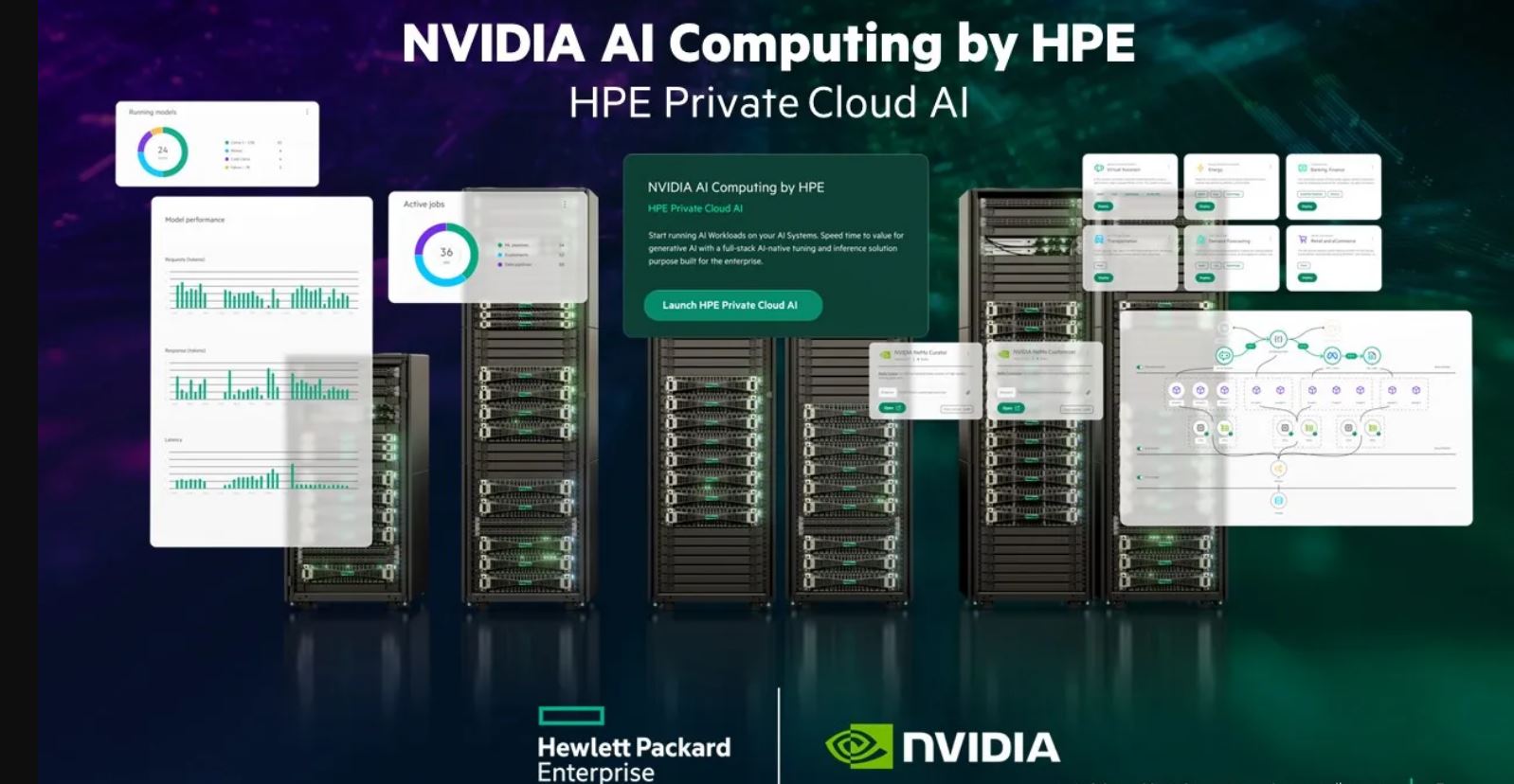

Hewlett Packard Enterprise and NVIDIA have announced NVIDIA AI Computing by HPE, a portfolio of co-developed Artificial Intelligence (AI) solutions and joint go-to-market integrations that enable enterprises to accelerate the adoption of generative AI.

Among NVIDIA AI Computing by HPE’s key offerings is HPE Private Cloud AI, a first-of-its-kind solution that provides the deepest integration to date of NVIDIA AI computing, networking, and software with HPE’s AI storage, compute, and HPE GreenLake cloud. The offering enables enterprises of every size to gain an energy-efficient, fast, and flexible path for sustainably developing and deploying generative AI applications.

Powered by the new OpsRamp AI copilot that helps IT operations improve workload and IT efficiency, HPE Private Cloud AI includes a self-service cloud experience with full lifecycle management and is available in four right-sized configurations to support a broad range of AI workloads and use cases.

All NVIDIA AI Computing by HPE offerings and services will be available through a joint go-to-market strategy that spans sales teams and channel partners, training, and a global network of system integrators—including Deloitte, HCLTech, Infosys, TCS, and Wipro—that can help enterprises across a variety of industries run complex AI workloads.

NVIDIA AI Computing by HPE was announced during the HPE Discover keynote by HPE President and CEO Antonio Neri, who was joined by NVIDIA Founder and CEO Jensen Huang. NVIDIA AI Computing by HPE marks the expansion of a decades-long partnership and reflects a substantial commitment of time and resources from each company.

“Generative AI holds immense potential for enterprise transformation, but the complexities of fragmented AI technology contain too many risks and barriers that hamper large-scale enterprise adoption and can jeopardize a company’s most valuable asset – its proprietary data,” said Neri. “To unleash the immense potential of generative AI in the enterprise, HPE and NVIDIA co-developed a turnkey private cloud for AI that will enable enterprises to focus their resources on developing new AI use cases that can boost productivity and unlock new revenue streams.”

“Generative AI and accelerated computing are fuelling a fundamental transformation as every industry races to join the industrial revolution,” said Huang. “Never before have NVIDIA and HPE integrated our technologies so deeply—combining the entire NVIDIA AI computing stack along with HPE’s private cloud technology—to equip enterprise clients and AI professionals with the most advanced computing infrastructure and services to expand the frontier of AI.”

NVIDIA AI Computing by HPE: A Co-Developed Private Cloud AI Portfolio

HPE Private Cloud AI delivers a unique, cloud-based experience to accelerate innovation and return on investment while managing enterprise risk from AI. The solution offers:

- Support for inference, fine-tuning, and retrieval-augmented generation (RAG) AI workloads that use proprietary data.

- Enterprise control for data privacy, security, transparency, and governance requirements.

- Cloud experience with ITOps and AIOps capabilities to increase productivity.

- A fast path to flexible consumption that can scale with future AI opportunities and growth.

Curated AI and Data Software Stack in HPE Private Cloud AI

The foundation of the AI and data software stack starts with the NVIDIA AI Enterprise software platform, which includes NVIDIA NIM™ inference microservices.

NVIDIA AI Enterprise accelerates data science pipelines and streamlines the development and deployment of production-grade copilots and other generative AI applications. Included with NVIDIA AI Enterprise, NVIDIA NIM delivers easy-to-use microservices for optimized AI model inference, offering a smooth transition from prototype to secure deployment of AI models across a variety of use cases.

Complementing NVIDIA AI Enterprise and NVIDIA NIM, HPE AI Essentials software delivers a ready-to-run set of curated AI and data foundation tools with a unified control plane. It provides adaptable solutions, ongoing enterprise support, and trusted AI services, such as data and model compliance, along with extensible features that ensure AI pipelines are compliant, explainable, and reproducible throughout the AI lifecycle.

To maximize performance for the AI and data software stack, HPE Private Cloud AI provides a fully integrated AI infrastructure stack that includes NVIDIA Spectrum-X™ Ethernet networking, HPE GreenLake for File Storage, and HPE ProLiant servers with support for NVIDIA L40S and NVIDIA H100 NVL Tensor Core GPUs and the NVIDIA GH200 NVL2 platform.

Cloud Experience Enabled by HPE GreenLake Cloud

HPE Private Cloud AI offers a self-service cloud experience enabled by HPE GreenLake cloud. Through a single, platform-based control plane, HPE GreenLake cloud services provide manageability and observability to automate, orchestrate, and manage endpoints, workloads, and data across hybrid environments, including sustainability metrics for workloads and endpoints.

HPE GreenLake Cloud and OpsRamp AI Infrastructure Observability and Copilot Assistant

OpsRamp's IT operations management is integrated with HPE GreenLake cloud to deliver observability and AIOps to all HPE products and services. OpsRamp now provides observability for the end-to-end NVIDIA accelerated computing stack, including NVIDIA NIM and AI software, NVIDIA Tensor Core GPUs, and AI clusters, as well as NVIDIA Quantum InfiniBand and NVIDIA Spectrum Ethernet switches. IT administrators can gain insights to identify anomalies and monitor their AI infrastructure and workloads across hybrid, multi-cloud environments.

The new OpsRamp operations copilot uses NVIDIA's accelerated computing platform to analyze large datasets and surface insights through a conversational assistant, boosting productivity for operations management. OpsRamp will also integrate with CrowdStrike APIs so customers can see a unified service map view of endpoint security across their entire infrastructure and applications.

Accelerate Time to Value with AI

To accelerate time to value for enterprises developing industry-focused AI solutions and use cases with clear business benefits, Deloitte, HCLTech, Infosys, TCS, and Wipro announced their support of the NVIDIA AI Computing by HPE portfolio and HPE Private Cloud AI as part of their strategic AI solutions and services.

HPE Adds Support for NVIDIA’s Latest Offerings

- HPE Cray XD670 supports eight NVIDIA H200 NVL Tensor Core GPUs and is ideal for LLM builders.

- HPE ProLiant DL384 Gen12 server with NVIDIA GH200 NVL2 is ideal for LLM consumers using larger models or RAG.

- HPE ProLiant DL380a Gen12 server supports up to eight NVIDIA H200 NVL Tensor Core GPUs and is ideal for LLM users looking for flexibility to scale their GenAI workloads.

- HPE will be a time-to-market partner supporting the NVIDIA GB200 NVL72 / NVL2, as well as the new NVIDIA Blackwell, NVIDIA Rubin, and NVIDIA Vera architectures.

High-Density File Storage Certified for NVIDIA DGX BasePOD and NVIDIA OVX Systems

HPE GreenLake for File Storage has achieved NVIDIA DGX BasePOD certification and NVIDIA OVX™ storage validation, providing customers with a proven enterprise file storage solution for accelerating AI, GenAI and GPU-intensive workloads at scale. HPE will be a time-to-market partner on upcoming NVIDIA reference architecture storage certification programs.

Availability

- HPE Private Cloud AI is expected to be generally available in the fall.

- HPE ProLiant DL380a Gen12 server with NVIDIA H200 NVL Tensor Core GPUs is expected to be generally available in the fall.

- HPE ProLiant DL384 Gen12 server with dual NVIDIA GH200 NVL2 is expected to be generally available in the fall.

- HPE Cray XD670 server with NVIDIA H200 NVL is expected to be generally available in the summer.
