Written by: Izzat Najmi Abdullah, Journalist, AOPG.

The impact of AI on content creation has been particularly evident in the scientific community, where the pressure to publish has led some researchers to turn to AI tools like ChatGPT to expedite the writing process. However, the use of AI in scientific writing has not been without significant drawbacks.

For starters, AI has a tendency to generate inaccurate content, a phenomenon known as AI hallucination. If caught, this can cause considerable embarrassment, not to mention confusion within the academic world, all while you are trying to publish in reputable journals.

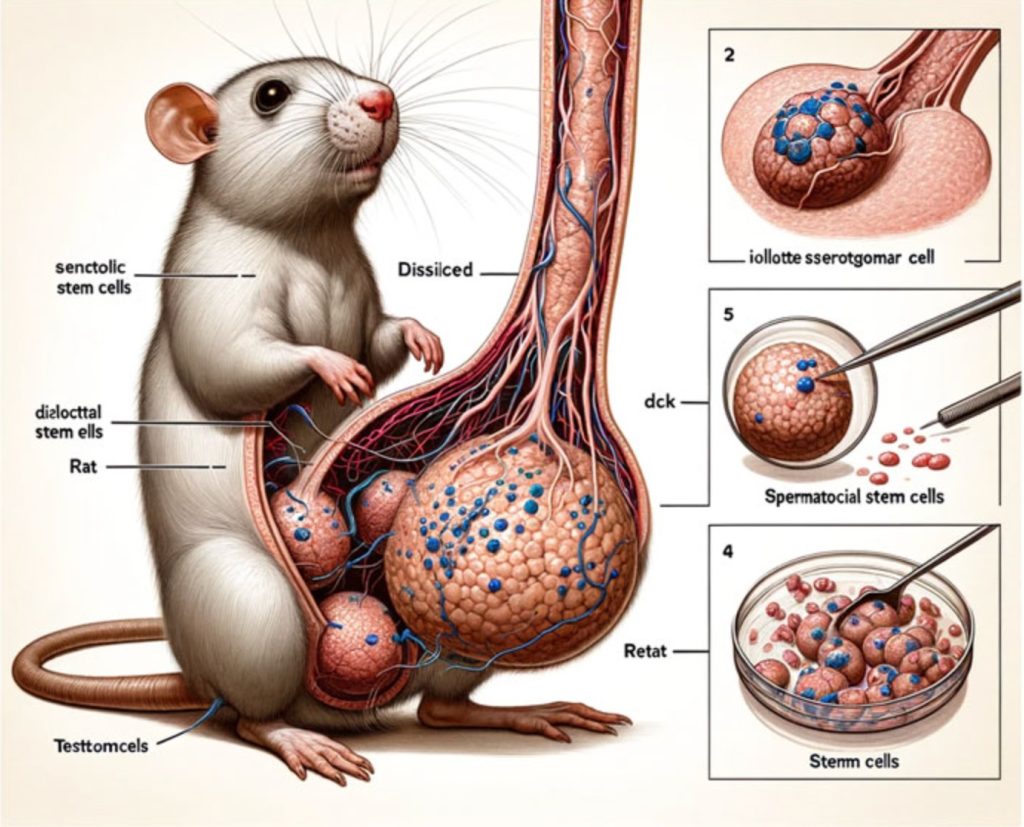

One particularly striking example is an AI-generated illustration of a rat with an unusually large “anatomical feature” (apologies if this is a bit unsettling), which was published in a well-known academic journal before being retracted. Similarly, another study included a horrifying graphic of human legs with multi-jointed bones that resembled hands, another glaring AI error that was published and quickly retracted. These incidents highlight the dangers of relying on AI-generated content without thorough verification.

The illustrated photo taken from the now retracted study – photo credit: The Telegraph

Andrew Gray, a librarian at University College London, analysed the influence of AI on academic papers and found that in 2023 alone, over 60,000 papers involved the use of AI, accounting for more than one per cent of the annual total. The sheer volume of AI-generated content in academic research is alarming, and as AI tools continue to advance, this problem is expected to grow.

The Rise of “Slop” Content

The term “slop” has emerged to describe the vast amounts of low-quality, unreliable content generated by AI. This content, often produced with little regard for accuracy, includes everything from bogus articles to dubious social media posts and poorly written e-books. The phenomenon of slop highlights not only the ease with which AI can produce content but also the potential dangers of flooding the Internet with subpar material.

The New York Times recently popularised the term, drawing attention to the growing prevalence of AI-generated content that is both easy and inexpensive to produce. An American journalist, for example, was able to create a fully automated political disinformation site, capable of churning out dozens of fake news stories each day. Such sites could have a profound impact on public opinion, particularly in the lead-up to elections.

The concept of slop is distinct from “botshit,” another term used to describe false or misleading content generated by AI tools, or bots. While botshit is often created with the intent to deceive, slop is more about the sheer volume of low-quality content that is flooding the Internet, making it increasingly difficult for users to discern what is true and what is not.

The Ouroboros Effect

One of the most concerning aspects of the AI content crisis is the potential for AI systems to train on their own generated content, creating a vicious cycle of misinformation. Researchers have warned that as more AI-generated material is uploaded to the Internet, there is a risk that future AI systems will increasingly rely on this content for training, leading to a degradation in the quality of AI outputs over time.

This phenomenon, sometimes referred to as the “dead Internet theory,” suggests that an increasing portion of the Internet is becoming automated, leading to a recursive loop in which AI content begets more AI content. A study by researchers at the University of Toronto found that when AI systems were trained on AI-generated text, the quality of the output deteriorated rapidly, resembling a repetitive list of nonsensical information. This process is akin to making a photocopy of a photocopy, where the quality degrades with each iteration.

The implications of this recursive loop are profound. As AI continues to produce and consume its own content, the Internet could become inundated with increasingly low-quality and misleading information, making it more challenging for users to find reliable sources.

AI Content and the Danger of Blind Trust

As AI-generated content becomes more prevalent, the need for advanced detection tools and increased vigilance is becoming vital, and I hope such tools arrive as soon as possible.

The potential for AI to be used in manipulative and deceptive ways is growing, and without proper safeguards, the Internet could become a breeding ground for misinformation, which I hope does not lead to any fatalities. I mean this in the best way, especially after news circulated that Google’s very own AI Overview (not the widely used Gemini) suggested that users add glue to make the cheese stick to their pizza, and even generated a response stating that geologists recommend eating one rock per day. That is pretty scary, considering that many people these days do not do thorough research and might blindly follow such advice if it seems authoritative.

So, for our readers, I have nothing else to advise but this: Do not trust 100% of whatever you’re reading or seeing online. And please, for the love of God, do some research before you actually decide to believe or even share anything you find online.
