Snowflake, the Data Cloud company, has announced Snowflake Arctic, a state-of-the-art large language model (LLM) uniquely designed to be the most open, enterprise-grade LLM on the market.

With its unique Mixture-of-Experts (MoE) architecture, Snowflake Arctic delivers top-tier intelligence with unparalleled efficiency at scale. It is optimised for complex enterprise workloads, topping several industry benchmarks across SQL code generation, instruction following, and more.

In addition, Snowflake is releasing Arctic’s weights under an Apache 2.0 licence, along with details of the research behind how it was trained, setting a new openness standard for enterprise AI technology. The Snowflake Arctic LLM is part of the Snowflake Arctic model family, a family of models built by Snowflake that also includes the best practical text-embedding models for retrieval use cases.

“This is a watershed moment for Snowflake, with our AI research team innovating at the forefront of AI,” said Sridhar Ramaswamy, CEO at Snowflake. “By delivering industry-leading intelligence and efficiency in a truly open way to the AI community, we are furthering the frontiers of what open source AI can do. Our research with Arctic will significantly enhance our capability to deliver reliable, efficient AI to our customers.”

Snowflake Arctic Breaks Ground with Truly Open, Widely Available Collaboration

According to a recent report by Forrester, approximately 46 percent of global enterprise AI decision-makers noted that they are leveraging existing open source LLMs to adopt generative AI as part of their organisation’s AI strategy. As the data foundation for more than 9,400 companies and organisations around the world, Snowflake is empowering all users to leverage their data with industry-leading open LLMs, while offering them flexibility and choice in which models they work with.

Now with the launch of Arctic, Snowflake is delivering a powerful, truly open model with an Apache 2.0 licence that permits ungated personal, research, and commercial use. Taking it one step further, Snowflake also provides code templates, alongside flexible inference and training options, so users can quickly get started with deploying and customising Arctic using their preferred frameworks.

These will include NVIDIA NIM with NVIDIA TensorRT-LLM, vLLM, and Hugging Face. For immediate use, Snowflake Arctic is available for serverless inference in Snowflake Cortex, Snowflake’s fully managed service that offers machine learning and AI solutions in the Data Cloud. It will also be available on Amazon Web Services (AWS), alongside other model gardens and catalogues, which will include Hugging Face, Lamini, Microsoft Azure, NVIDIA API catalogue, Perplexity, Together AI, and more.

Snowflake Arctic Provides Top-Tier Intelligence with Leading Resource-Efficiency

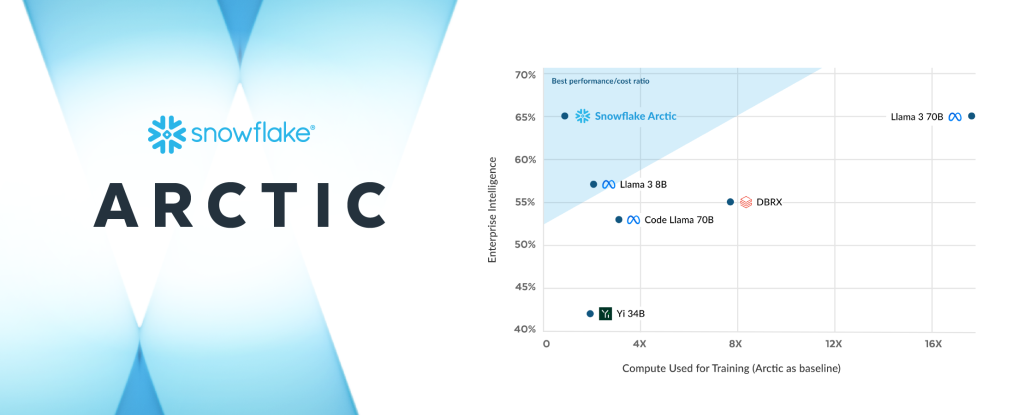

Snowflake’s AI research team, which includes a unique composition of industry-leading researchers and system engineers, took less than three months and spent roughly one-eighth of the training cost of similar models when building Arctic.

Having trained Arctic on Amazon Elastic Compute Cloud (Amazon EC2) P5 instances, Snowflake is setting a new baseline for how fast state-of-the-art open, enterprise-grade models can be trained, ultimately enabling users to create cost-efficient custom models at scale.

As part of this strategic effort, Arctic’s differentiated MoE design improves both training systems and model performance, with a meticulously designed data composition focused on enterprise needs. Arctic also delivers high-quality results, activating only 17 billion of its 480 billion parameters at a time to achieve industry-leading quality with unprecedented token efficiency.
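To make the idea of activating only a fraction of a model's parameters concrete, here is a minimal, generic top-k Mixture-of-Experts routing sketch. It is not Snowflake's implementation: the model width, the 16 experts, k = 2, and the helper names (`matvec`, `top_k_moe`, `rand_matrix`) are illustrative assumptions. A small router scores every expert for a token, only the k best-scoring experts actually run, and their outputs are mixed with softmax weights.

```python
import math
import random


def matvec(v, m):
    """Multiply row vector v (length d) by matrix m (d rows x d_out columns)."""
    return [sum(v[i] * m[i][j] for i in range(len(v))) for j in range(len(m[0]))]


def top_k_moe(token, gate_w, experts, k=2):
    """Generic top-k MoE forward pass for one token (illustrative, not Arctic's code)."""
    logits = matvec(token, gate_w)  # one router score per expert
    chosen = sorted(range(len(logits)), key=lambda e: logits[e], reverse=True)[:k]
    m = max(logits[e] for e in chosen)
    exps = [math.exp(logits[e] - m) for e in chosen]  # softmax over the chosen experts only
    total = sum(exps)
    out = [0.0] * len(experts[0][0])
    for weight, e in zip((x / total for x in exps), chosen):
        y = matvec(token, experts[e])  # only the k selected experts do any compute
        out = [o + weight * yi for o, yi in zip(out, y)]
    return out, chosen


def rand_matrix(rows, cols, rng):
    return [[rng.gauss(0, 1) for _ in range(cols)] for _ in range(rows)]


# Hypothetical toy sizes chosen for readability.
rng = random.Random(0)
d_model, n_experts, k = 8, 16, 2
gate_w = rand_matrix(d_model, n_experts, rng)
experts = [rand_matrix(d_model, d_model, rng) for _ in range(n_experts)]
token = [rng.gauss(0, 1) for _ in range(d_model)]

out, chosen = top_k_moe(token, gate_w, experts, k)
print("experts used:", chosen)
print(f"expert fraction active per token: {k / n_experts:.1%}")  # 12.5%
print(f"Arctic active/total parameters: {17 / 480:.1%}")  # 3.5%
```

Because compute scales with the experts that actually run, each token here touches 2 of 16 expert matrices; the same principle is what allows Arctic to use roughly 3.5 percent (17 of 480 billion) of its parameters per token.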

In an efficiency breakthrough, Snowflake Arctic activates roughly 50 percent fewer parameters than DBRX, and 75 percent fewer than Llama 3 70B, during inference or training. In addition, it outperforms leading open models, including DBRX and Mixtral-8x7B, in coding (HumanEval+, MBPP+) and SQL generation (Spider), while simultaneously providing leading performance in general language understanding (MMLU).
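The efficiency percentages above can be checked with simple arithmetic. This sketch assumes the publicly reported active-parameter counts: 36 billion of DBRX's 132 billion parameters are active per token, while Llama 3 70B is a dense model, so all 70 billion of its parameters are active. The `ACTIVE_PARAMS_B` table and the `reduction_vs` helper are hypothetical names used only for illustration.

```python
# Active parameters per token, in billions (publicly reported figures; treat as assumptions).
ACTIVE_PARAMS_B = {
    "Snowflake Arctic": 17,  # MoE: 17B active of 480B total
    "DBRX": 36,  # MoE: 36B active of 132B total
    "Llama 3 70B": 70,  # dense: every parameter is active
}


def reduction_vs(baseline, model="Snowflake Arctic"):
    """Fraction fewer active parameters `model` uses relative to `baseline`."""
    return 1 - ACTIVE_PARAMS_B[model] / ACTIVE_PARAMS_B[baseline]


for other in ("DBRX", "Llama 3 70B"):
    print(f"Arctic vs {other}: {reduction_vs(other):.0%} fewer active parameters")
```

Under these assumptions the exact reductions come out at about 53 percent and 76 percent, consistent with the rounded "roughly 50 percent" and "75 percent" figures.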

Snowflake Continues to Accelerate AI Innovation for All Users

Snowflake continues to provide enterprises with the data foundation and cutting-edge AI building blocks they need to create powerful AI and machine learning apps with their enterprise data. When accessed in Snowflake Cortex, Arctic will accelerate customers’ ability to build production-grade AI apps at scale, within the security and governance perimeter of the Data Cloud.

In addition to the Arctic LLM, the Snowflake Arctic family of models also includes the recently announced Arctic embed, a family of state-of-the-art text embedding models available to the open source community under an Apache 2.0 licence. The family of five models is available on Hugging Face for immediate use and will soon be available as part of the Snowflake Cortex embed function (in private preview).

These embedding models are optimised to deliver leading retrieval performance at roughly a third of the size of comparable models, giving organisations a powerful and cost-effective solution when combining proprietary datasets with LLMs as part of a Retrieval Augmented Generation or semantic search service.
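The retrieval step these embedding models serve can be sketched as a nearest-neighbour search by cosine similarity. This is a minimal illustration, not the Arctic embed API: the four-dimensional vectors, document names, and helper functions (`cosine`, `top_k_documents`) are hypothetical stand-ins, and real embeddings have far more dimensions.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def top_k_documents(query_vec, doc_vecs, k=2):
    """Return the ids of the k documents whose embeddings are most similar to the query."""
    scored = sorted(doc_vecs.items(), key=lambda item: cosine(query_vec, item[1]), reverse=True)
    return [doc_id for doc_id, _ in scored[:k]]


# Hypothetical 4-dimensional embeddings standing in for real model output.
docs = {
    "q3_revenue_report": [0.9, 0.1, 0.0, 0.2],
    "hr_leave_policy": [0.0, 0.8, 0.5, 0.1],
    "q3_sales_pipeline": [0.8, 0.2, 0.1, 0.3],
}
query = [0.85, 0.15, 0.05, 0.25]  # hypothetical embedding of a question about Q3 results

context_ids = top_k_documents(query, docs, k=2)
print(context_ids)  # the two Q3 documents; the HR policy is not retrieved
```

In a Retrieval Augmented Generation service, the text of the retrieved documents would then be added to the LLM prompt as context. Production systems typically replace this brute-force scan with a vector index.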

Snowflake also prioritises giving customers access to the newest and most powerful LLMs in the Data Cloud, including the recent additions of Reka and Mistral AI’s models. Moreover, Snowflake recently announced an expanded partnership with NVIDIA to continue its AI innovation, bringing together the full-stack NVIDIA accelerated platform with Snowflake’s Data Cloud to deliver a secure and formidable combination of infrastructure and compute capabilities to unlock AI productivity.

Snowflake Ventures has also recently invested in Landing AI, Mistral AI, Reka, and more to further Snowflake’s commitment to helping customers create value from their enterprise data with LLMs and AI.
